Limiting UIPinchGestureRecognizer Zoom Levels

Here is how to use a UIPinchGestureRecognizer and how to limit its zoom levels on your custom views and content. My use case was resizing images on a custom view. I wanted to prevent both very large and very small images. Very large images show a lot of pixelation and artifacts, while very small images are hard to touch and a user cannot do anything useful with them.

Basic Math

1. We know: currentSize * scaleFactor = newSize

2. Clamp the maximum scale factor using the proportion maxScale / currentScale

3. Clamp the minimum scale factor using the proportion minScale / currentScale

The code below assumes there is an instance variable CGFloat lastScale and that a view has been set for the UIPinchGestureRecognizer.

Sample Code

#import <QuartzCore/QuartzCore.h> // required for working with the view's layers

//....

- (void)handlePinchGesture:(UIPinchGestureRecognizer *)gestureRecognizer {

if([gestureRecognizer state] == UIGestureRecognizerStateBegan) {

// Reset the last scale, necessary if there are multiple objects with different scales

lastScale = [gestureRecognizer scale];

}

if ([gestureRecognizer state] == UIGestureRecognizerStateBegan ||

[gestureRecognizer state] == UIGestureRecognizerStateChanged) {

CGFloat currentScale = [[[gestureRecognizer view].layer valueForKeyPath:@"transform.scale"] floatValue];

// Constants to adjust the max/min values of zoom

const CGFloat kMaxScale = 2.0;

const CGFloat kMinScale = 1.0;

CGFloat newScale = 1 - (lastScale - [gestureRecognizer scale]); // incremental scale factor since the last callback (1.0 means no change)

newScale = MIN(newScale, kMaxScale / currentScale);

newScale = MAX(newScale, kMinScale / currentScale);

CGAffineTransform transform = CGAffineTransformScale([[gestureRecognizer view] transform], newScale, newScale);

[gestureRecognizer view].transform = transform;

lastScale = [gestureRecognizer scale]; // Store the previous scale factor for the next pinch gesture call

}

}
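The clamping arithmetic from the Basic Math section can be checked on its own, away from UIKit. Here is a minimal C++ sketch of just that step; clampScaleFactor is a hypothetical helper name, not something from the handler above, and the limits are passed in rather than hard-coded.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical helper (not part of UIKit): given the factor the gesture asks
// for and the scale the view already has, return a factor that keeps
// currentScale * factor inside [minScale, maxScale].
double clampScaleFactor(double requestedFactor, double currentScale,
                        double minScale, double maxScale) {
    assert(currentScale > 0.0 && minScale <= maxScale);
    // Upper bound: currentScale * factor <= maxScale
    double factor = std::min(requestedFactor, maxScale / currentScale);
    // Lower bound: currentScale * factor >= minScale
    factor = std::max(factor, minScale / currentScale);
    return factor;
}
```

For a view currently at 1.5x with limits of 1.0 and 2.0, a request to double the size is cut back to 2.0 / 1.5, so the view lands exactly on the maximum instead of overshooting it.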

Artwork Evolution 1.2.80 Released!

Download

Buy Artwork Evolution on the App Store and create wallpapers for iPhone, iPod Touch, and iPad!

New Features

- Coffee Table Photos: Touch, slide, and flick photos off the table!

- Updated main screen user interface

Video

http://www.youtube.com/watch?v=xkwE8BRQsAo

Artwork Evolution on App Store

Artwork Evolution, my first iOS App is now available on the App Store for iPhone, iPod Touch, and iPad. It allows you to create complex abstract art with the touch of a finger. You can breed images together to create new images.


[Figure: Artwork Evolution on iPhone 4]

Tutorial Video

http://www.youtube.com/watch?v=_VZnFsnO4YY

Objective-C/C++ iPhone Build Failures

If you're working with Objective-C/C++ (i.e. mixing both languages) in an iPhone/Mac application you may come across some strange errors in the build process due to a configuration issue.

error: bits/c++config.h: No such file or directory

[Figure: Too many build failures]

One of my projects, Texture Evolution, was a Mac application that referenced a C++ Mac library. About 6 months ago I ran into an issue where my build would fail. It may have been related to an update to Xcode, but I'm not entirely sure. After a lot of time, frustration, Googling, and project configuration changes, I came across a solution.

Today I ran into the same problem and couldn't quite remember how to fix it for my Doxygen Xcode documentation Target that references the C++ Mac library. I wasted more time trying to figure it out again, so here's the breakdown.

The Problem:

Including iostream or other STL headers from C++ while compiling with the Base SDK set to Mac OS X 10.4 and GCC 4.0 fails with errors like these:

/Developer/Platforms/iPhoneOS.platform/Developer/SDKs/iPhoneOS4.1.sdk/usr/include/c++/4.0.0/iostream:43:0 /Developer/Platforms/iPhoneOS.platform/Developer/SDKs/iPhoneOS4.1.sdk/usr/include/c++/4.0.0/iostream:43:28: error: bits/c++config.h: No such file or directory

/Developer/Platforms/iPhoneOS.platform/Developer/SDKs/iPhoneOS4.1.sdk/usr/include/c++/4.0.0/iosfwd:45:0 /Developer/Platforms/iPhoneOS.platform/Developer/SDKs/iPhoneOS4.1.sdk/usr/include/c++/4.0.0/iosfwd:45:29: error: bits/c++locale.h: No such file or directory

/Developer/Platforms/iPhoneOS.platform/Developer/SDKs/iPhoneOS4.1.sdk/usr/include/c++/4.0.0/iosfwd:46:0 /Developer/Platforms/iPhoneOS.platform/Developer/SDKs/iPhoneOS4.1.sdk/usr/include/c++/4.0.0/iosfwd:46:25: error: bits/c++io.h: No such file or directory

(11,000+ other errors)

The Solution:

Set the Base SDK to Mac OS X 10.5 and GCC to 4.2. You'll need to make changes to the project using the library as well as the library's Target/Project settings. When you make changes, make sure the Target properties display "All Configurations" (i.e. Debug/Release/Release Adhoc/Release AppStore) so that you fix it for all of your build types. Double-check that your static libraries and your project Targets have matching configurations.

[Figure: Using Base SDK: Mac OS X 10.5 and GCC 4.2]

Why?

It may be a simple configuration issue, but I'm not really sure. For some reason when I use GCC 4.0 it builds against arm and uses the iPhone SDK, but when I use GCC 4.2 it uses the Mac SDK. The library is explicitly targeting Mac OS X 10.4, but it doesn't seem to work when it's targeting GCC 4.0. Here's the comparison build output:

Base SDK Mac OS X 10.4, GCC 4.0

CompileC /Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug-iphoneos/MacEvolutionLib.build/Objects-normal/armv6/Canvas.o ../../source/Canvas.cpp normal armv6 c++ com.apple.compilers.gcc.4_0

cd /Users/paulsolt/dev/ArtworkEvolution/Xcode/ArtworkEvolution

setenv LANG en_US.US-ASCII

setenv PATH "/Developer/Platforms/iPhoneOS.platform/Developer/usr/bin:/Developer/usr/bin:/usr/bin:/bin:/usr/sbin:/sbin"

/Developer/Platforms/iPhoneOS.platform/Developer/usr/bin/gcc-4.0 -x c++ -arch armv6 -fmessage-length=0 -pipe -Wno-trigraphs -fpascal-strings -O0 -Wreturn-type -Wunused-variable -isysroot /Developer/Platforms/iPhoneOS.platform/Developer/SDKs/iPhoneOS4.1.sdk -mfix-and-continue -gdwarf-2 -mthumb -miphoneos-version-min=3.2 -iquote /Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug-iphoneos/MacEvolutionLib.build/MacEvolutionLib-generated-files.hmap -I/Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug-iphoneos/MacEvolutionLib.build/MacEvolutionLib-own-target-headers.hmap -I/Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug-iphoneos/MacEvolutionLib.build/MacEvolutionLib-all-target-headers.hmap -iquote /Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug-iphoneos/MacEvolutionLib.build/MacEvolutionLib-project-headers.hmap -F/Users/paulsolt/dev/xcode_build_output/Debug-iphoneos -I/Users/paulsolt/dev/xcode_build_output/Debug-iphoneos/include -I/Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug-iphoneos/MacEvolutionLib.build/DerivedSources/armv6 -I/Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug-iphoneos/MacEvolutionLib.build/DerivedSources -fvisibility=hidden -c /Users/paulsolt/dev/ArtworkEvolution/Xcode/ArtworkEvolution/../../source/Canvas.cpp -o /Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug-iphoneos/MacEvolutionLib.build/Objects-normal/armv6/Canvas.o

Base SDK Mac OS X 10.5, GCC 4.2

CompileC /Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug/MacEvolutionLib.build/Objects-normal/x86_64/Canvas.o /Users/paulsolt/dev/ArtworkEvolution/Xcode/ArtworkEvolution/../../source/Canvas.cpp normal x86_64 c++ com.apple.compilers.gcc.4_2

cd /Users/paulsolt/dev/ArtworkEvolution/Xcode/ArtworkEvolution

setenv LANG en_US.US-ASCII

/Developer/usr/bin/gcc-4.2 -x c++ -arch x86_64 -fmessage-length=0 -pipe -Wno-trigraphs -fpascal-strings -fasm-blocks -O0 -Wreturn-type -Wunused-variable -isysroot /Developer/SDKs/MacOSX10.5.sdk -mfix-and-continue -mmacosx-version-min=10.5 -gdwarf-2 -iquote /Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug/MacEvolutionLib.build/MacEvolutionLib-generated-files.hmap -I/Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug/MacEvolutionLib.build/MacEvolutionLib-own-target-headers.hmap -I/Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug/MacEvolutionLib.build/MacEvolutionLib-all-target-headers.hmap -iquote /Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug/MacEvolutionLib.build/MacEvolutionLib-project-headers.hmap -F/Users/paulsolt/dev/xcode_build_output/Debug -I/Users/paulsolt/dev/xcode_build_output/Debug/include -I/Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug/MacEvolutionLib.build/DerivedSources/x86_64 -I/Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug/MacEvolutionLib.build/DerivedSources -fvisibility=hidden -c /Users/paulsolt/dev/ArtworkEvolution/Xcode/ArtworkEvolution/../../source/Canvas.cpp -o /Users/paulsolt/dev/xcode_build_output/ArtworkEvolution.build/Debug/MacEvolutionLib.build/Objects-normal/x86_64/Canvas.o

Notes:

GCC 4.2 is required for 10.5. If you try to use the Base SDK of 10.4 with GCC 4.2, you'll get this error:

GCC 4.2 is not compatible with the Mac OS X 10.4 SDK

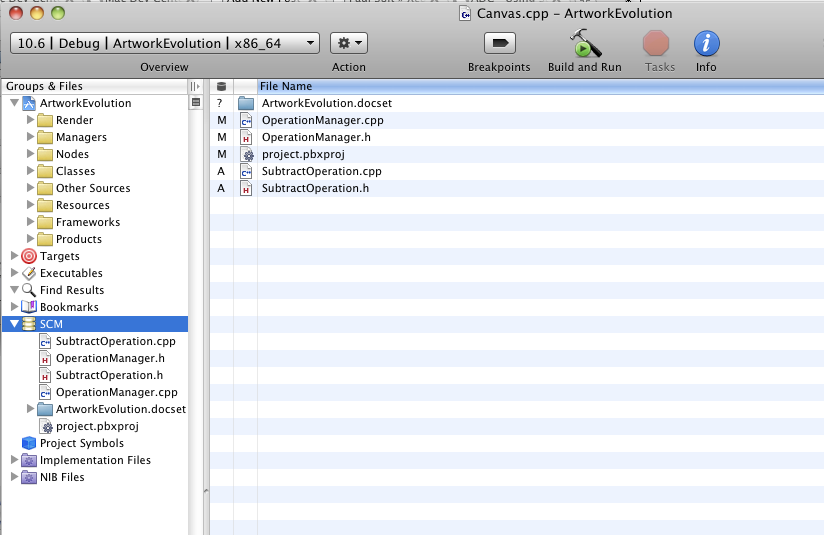

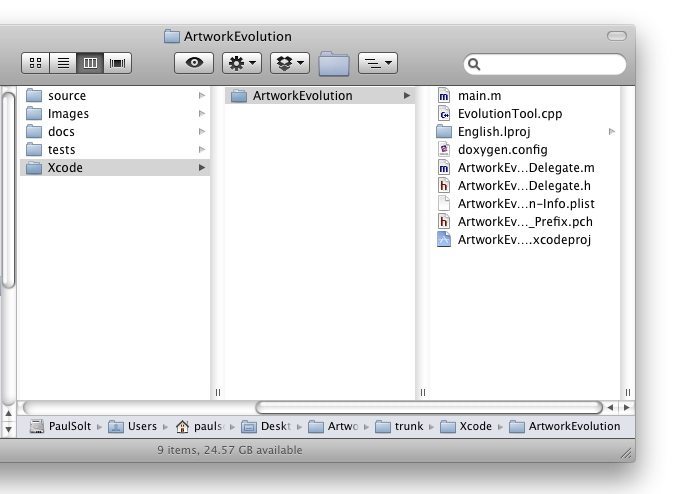

Xcode 3.2 SCM with SVN and Complex Project Directories

I started messing around with SCM in Xcode 3.2 using Subversion and hit a minor road bump getting SCM to see modified files outside the project directory. It turns out there's an easy solution, but it wasn't obvious.

I won't go into details on how to set up SVN or SCM for the project, so you can follow this Apple guide for Xcode 3: http://developer.apple.com/mac/articles/server/subversionwithxcode3.html

Problem: I have common source that will be shared across different platforms in the source directory, above my Xcode projects directory. The default settings for SCM will look in your project's directory, but I need to look two directories up (../../).

[Figure: Complex Cross-platform Project Structure]


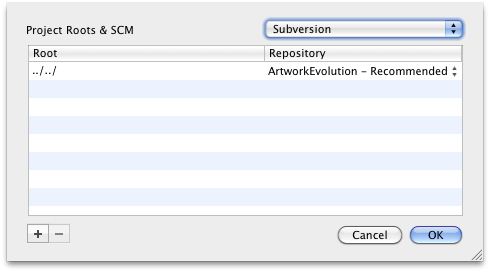

We need to modify the project settings for SCM and configure the roots.

- Double-click on the project name in the Groups & Files Pane and you'll get the Project Properties window.

- Click on Configure Roots & SCM in the Project Properties

- Set the Root to point above the project directory. In my example, the source folder is located two directories above the Xcode project. I set Roots to "../../"

- Select the SCM repository from the ones that were set up during the SCM configuration process. I noticed that setting the root will reset the repository, so make sure it doesn't change on you.

- Double-click SCM and we should be able to see any file under SVN version control in the parent directory two levels up. I can see changes to the directories source, images, Xcode, docs, and tests.

[Figure: Project Properties]

[Figure: Project Roots & SCM Settings]


[Figure: SCM File View with All Subdirectories]

More Settings

Another helpful setting is to turn on the SCM on the files view.

- Turn on the SCM on the Editor files view: Right-click on the view bar in the file view on the right side and enable SCM. Now you can see when files have been changed in the normal view outside the SCM view.

- View Flat Files: If you double-click on SCM in the Groups & Files pane you will get another window. On the bottom left side there is a button. Set it to "Flat" if you like to see just the files that changed without regard to where in the SVN repository they were.

[Figure: Viewing SVN File Changes]


iPhone Unit Testing Explained - Part 1

Here's the first part of a multi-part iPhone Unit Testing Series. (Updated 3/31/12 with Xcode 4 testing)

The second part is iPhone Unit Testing Explained - Part II.

How comfortable are you on a bike without a helmet? Writing code without tests is like riding a bike without a helmet. You might feel free and indestructible for now, but one day you'll fall and it's going to hurt.

I can't start a new project without source control, it's one of my requirements. Personally, I feel very uncomfortable and exposed without something to track my code changes. In reality, I hardly need to revert changes, but the knowledge that I can go back to something else enables me to make bigger and more confident changes.

Testing should also be a requirement, and it doesn't get enough attention in the classroom or on code projects. Part of the problem is a lack of information and a steep learning curve. Most tutorials provide the bare minimum and don't provide real-world examples. There are two major hurdles that you'll need to overcome.

1. How do I use this testing framework?

Getting started with some new technology is always a daunting task. The only way you're going to learn is if you teach yourself. Make sure to free yourself from distractions and get a cup of coffee so you can think clearly. There are lots of unit testing frameworks, and you need to evaluate what your requirements are going to be. For beginners it's not always clear, and you're going to have to sample the available options.

- C++: Boost Test Library, Google Test, CppUnit (C++ Unit Testing Roundup)

- Objective-C: OCUnit, GTM iPhone Unit Testing, GHUnit


2. How do I write testable code?

Most computer science courses don't explain how to write testable code; they focus on output matching. Students assume that if their code displays X and they're trying to display X, then the code must be correct. Testable code can be hard to master, but it's worth the effort. There are two important aspects of unit testing that I've discovered.

a. A unit test, or a set of unit tests, validates that the function you just wrote works like you think it works. It takes microseconds for the computer to tell you if something works or not, and it takes you seconds or minutes to validate if it works. Your time is valuable, let computers do the grunt work of testing and you'll have more time to write code.

b. Unit tests force design testing. High-level designs don't translate into direct code without usability issues. As you write and test code you will find that a function should take X parameters instead of Y parameters, or that the function name doesn't match the functionality it provides. Take the time to fix your design and you'll have code that's easier to use and better documented. The sooner you fix design issues, the more time you'll have to work on features.

I still haven't answered how to write testable code. Getting started is a matter of baby steps; take two steps at a time, and soon you'll be walking.

a. Isolate the basic functions that have clear input and output. Write a test that provides a function with good input and bad input. Think about what additional functions you'll need to prove that the function you're testing works as expected. For example, in order to test a setter function, you'll need a getter function. As you write more tests, refactor common tasks into helper functions, so that you can spend more time testing functionality instead of writing boilerplate test code.

b. Make sure you document each function as you test it, if you don't write comments now, it's never going to happen. Documentation is best when the details of the function are fresh in your mind and you can explain the edge cases.
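To make the two steps above concrete, here is a minimal C++ sketch that uses plain assert instead of any of the frameworks listed earlier. Everything in it is hypothetical and only for illustration: the Canvas class, its width rule, and the setWidthRejects helper are not from any real project. It tests a setter through its getter with good and bad input, and pulls the repeated try/catch into a helper.

```cpp
#include <cassert>
#include <stdexcept>

// Hypothetical class under test: the width must always stay positive.
class Canvas {
public:
    int width() const { return width_; }
    // Stores w, or throws std::invalid_argument if w is not positive.
    void setWidth(int w) {
        if (w <= 0) throw std::invalid_argument("width must be positive");
        width_ = w;
    }
private:
    int width_ = 1;
};

// Test helper: returns true if setWidth refuses the given value.
bool setWidthRejects(Canvas &canvas, int w) {
    try {
        canvas.setWidth(w);
    } catch (const std::invalid_argument &) {
        return true;
    }
    return false;
}

// Good input: the getter must report what the setter stored.
void testSetWidthStoresGoodInput() {
    Canvas canvas;
    canvas.setWidth(320);
    assert(canvas.width() == 320);
}

// Bad input: the setter must refuse it and keep the previous value.
void testSetWidthRejectsBadInput() {
    Canvas canvas;
    canvas.setWidth(320);
    assert(setWidthRejects(canvas, 0));
    assert(setWidthRejects(canvas, -5));
    assert(canvas.width() == 320);
}
```

Calling both test functions from a small test program is enough to run them; a real framework adds discovery, reporting, and nicer failure messages on top of the same idea.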

Not everything is easy to test, but don't be discouraged, since the tests you write will begin to validate that your code works as documented. You're making progress and it's going to get easier as you write more tests. Another benefit is that you'll have higher quality code that you can rely on into the future.


iPhone Testing

Over the past weekend I set out to integrate a unit test framework into my current project. I ended up testing three different frameworks: OCUnit, Google Toolbox for Mac (GTM) iPhone Unit Testing, and GHUnit. I will provide a brief overview and pros and cons to each framework and my final conclusion.

OCUnit (SenTesting)

Pros: Xcode integration (super easy), acceptance testing during builds, test debugging in Xcode 4 (Updated 3/31/12 for Xcode 4 improvements)

- Xcode 4+ has built in support for unit testing using the OCUnit testing (SenTesting) framework. It's easy to create a new test case, since there are Xcode test/target templates.

- Xcode can display test failures like syntax errors with the code bubbles during the build/run stages, which can facilitate acceptance testing. These errors will link to the file and unit test that is failing, which is very helpful in terms of usability.


Cons: logic tests vs. application tests, device/simulator test limitations

- There are far too many steps required to create the unit tests in Xcode 3.2.4. I'm not sure why more of the process isn't automated; maybe it will be in Xcode 4? To provide test coverage I needed to create 3 additional test targets, each with specific settings.

- Writing a test for something basic (addition) is trivial using OCUnit, but testing code that may crash (memory management) isn't. You need to follow a special set of instructions to get unit test debugging to work, otherwise you'll be scratching your head. (Link 1) (Link 2) (Link 3)

- Apple created their own categories of tests: logic tests and application tests, but the distinction isn't clear. Logic tests are meant to test code that doesn't use any UI code, while application tests are designed for testing UI-related code. This sounds fine, but when you try to create unit tests, the code you're testing becomes very tightly coupled to the tests you can write.

- For example, logic tests are not run in an application, so code that would provide a documents or bundle directory for saving/loading may not work without refactoring the code.

- The biggest limitation is that logic tests only run using the iPhone Simulator and application tests only run on the device. Logic tests are not run when you build for the actual device, so you have to constantly switch between simulator/device to get acceptance test coverage. Running application tests only on the device is very slow, compared to running it in the iPhone Simulator.


Google Toolbox for Mac (GTM): iPhone Unit Testing

Pros: Xcode integration, acceptance testing during builds, easy setup

- GTM iPhone unit testing provides code bubbles with test failures, like OCUnit, when building the target for the iPhone Simulator. The failure indicators are better than OCUnit, since it includes the error icon on the line of failure in GTM. However, the code bubbles do not appear when building for the device. The code bubbles can be clicked on to take you to the unit test that is failing. You can perform acceptance testing when building for the iPhone Simulator.


- Compared to OCUnit, GTM iPhone Unit Testing is a breeze to set up. You can write unit tests that target the UI and logic of your code within the same class.


Cons: Documentation, output

- GTM iPhone unit testing was straightforward to set up using the Google Code guide. The documentation is a little sparse, but it provides enough to get going. I think pictures would help explain the process and help break up all the text. I found the following visual tutorial semi-helpful, but there's no definitive source.

- When building a test and running the script during the build phase, there is a lot of extraneous output that can be overwhelming. Some of the output is visible in the previous code bubble screenshot and the screenshot below. The output is not formatted as nicely as the OCUnit output.


GHUnit

Pros: Device/simulator testing, easy debugging, easy setup, iPhone Test Result GUI, command line interface

- GHUnit has the easiest setup process of the three unit testing frameworks. It's based on GTM and it is capable of running OCUnit, GTM, and GHUnit test cases. The documentation is pretty good and there is a nice visual setup guide.

- GHUnit makes it easy to debug any test case without any additional setup. Place a breakpoint and run the test application in the simulator or on the device.

- GHUnit provides a way to run tests on the command line, if you want to integrate with continuous integration tools. (Hudson)

- The major selling point is that it provides a graphical user interface to display the results of each test, including test duration, if performance is important. The interface allows the user to switch between all tests and failed tests in a unit test application. Additionally, you can drill into a test failure to display the unit test failure message and the stack trace. After using GHUnit, GTM Unit Testing leaves a lot to be desired beyond log output for visual feedback.

- The GUI enables a pleasant workflow where you can set breakpoints and re-run tests to try and isolate bugs in the test code. The workflow replaces the need to perform acceptance testing during the build process, because it is so much more interactive.

- On iPhone 4 it will resume on the All/Failed tests tab that you were last running/debugging. Note: There is a minor issue described below in the cons section.


Cons: No acceptance testing, Documentation, minor GUI issues, Setup Time

- There is no way to run tests as part of the build process using GHUnit. It's a paradigm shift from the OCUnit and other similar unit testing frameworks that enable testing during the build process. However, you can use a continuous integration framework to run unit testing as you check in code to a central repository.


- Documentation is good, but it could be better. The behaviors of the GUI are not clearly defined. Where to find the GHUnitIOS.Framework was not clear in the documentation. I updated an article on github that addressed the documentation issue.

- There are some minor GUI issues if you use the GHUNIT_AUTORUN environment variable. If you switch to the Failed tests tab on the GUI, it will only run the failed tests. Running the subset is good when you're fixing an issue, but it can be confusing if you're adding a new test. The new test won't run until you switch back to the All tests tab. Setting a breakpoint on the new test will also do nothing, since it doesn't run.

- I'd like to see an additional button on the Failed tests tab that says "Run All Tests" and on the All tests tab a "Run Failed Tests" button. I think the additional buttons would make it clear that run doesn't always do the same thing.


Conclusion

Update: 3/31/12 With Xcode 4, creating and debugging unit tests in Xcode is so easy, it's not worth the initial effort to integrate GHUnit, unless you need visual feedback on the device.

I would recommend using the built in Xcode 4 unit testing bundles and then add GHUnit after the fact, when you need visual feedback. GHUnit is compatible with the default testing available in Xcode 4.

The testing interface in GHUnit is the easiest to see test results and it doesn't require as much log output digging. I like the workflow I have when using GHUnit.

1. Switch to the GHUnit Test Target

2. Write unit test for new function

3. Test new function (Command-R) and see it fails (Optional)

4. Stub and implement new function

5. Test implementation (Command-R). Repeat step 4 until the test passes

6. Repeat for next function

7. Switch back to the Application Test Target

Updated: (3/31/12) With the release of Xcode 4 and more integrated testing, getting started with OCUnit/SenTest is a lot easier. I will be providing a walkthrough on setting up Xcode 4 and working with bundle resources in the next part.

(Part II - working with unit tests in Xcode 4)


iOS: Converting UIImage to RGBA8 Bitmaps and Back

Edited 8/24/11: Fixed a bug with alpha transparency not being preserved. Thanks for the tip Scott! Updated the gist and github project to test transparent images.

Edited 12/13/10: Updated the code on github/gist to fix static analyzer warnings. Changed a function name to conform to the Apple standard.

When I started working with iPhone I was working with Objective-C and C++. I created a library in C++ and needed access to a bitmap array so that I could perform image processing. In order to do so I had to create some helper functions to convert between UIImage objects and the RGBA8 bitmap arrays.

Here are the updated routines that should work on iPhone 4.1 and iPad 3.2. The iPhone 4 has a high-resolution screen that requires setting a scaling factor for high-resolution images. I've added support to set the scaling factor based on the device's mainScreen scaling factor.

UPDATE: 9/23/10 My code to work with the Retina display was incorrect, it ran fine on iPad with 3.2, but it didn't do anything "high-res" on iPhone 4. I was using the following:

__IPHONE_OS_VERSION_MAX_ALLOWED >= 30200

but it isn't safe. When I build it for a universal App targeting 4.1/3.2 it always evaluates to 40100, so the expression doesn't make sense. (Side note: I took this check from Apple's website when iPad 3.2 was actually ahead of iPhone 3.1.X, but that doesn't help with iPhone 4.1 being ahead of iPad 3.2.)

The issue with iPad is that the imageWithCGImage:scale:orientation: selector doesn't exist on iOS 3.2, most likely it will on iOS 4.2, so the following code should be safe. Some methods in iOS 4.1 don't exist in iOS 3.2, so you need to check to see if a newer method exists before trying to execute it. There are two methods you can use depending on the class/instance (+/-) modifier on the function definition.

+ (BOOL)respondsToSelector:(SEL)aSelector // (+) Class method check

+ (BOOL)instancesRespondToSelector:(SEL)aSelector // (-) Instance method check

imageWithCGImage:scale:orientation: is a class method, so we need to use respondsToSelector:. The correct code to scale the CGImage is below:

if([UIImage respondsToSelector:@selector(imageWithCGImage:scale:orientation:)]) {

float scale = [[UIScreen mainScreen] scale];

image = [UIImage imageWithCGImage:imageRef scale:scale orientation:UIImageOrientationUp];

} else {

image = [UIImage imageWithCGImage:imageRef];

}


Here are some images that show what happens if you don't use imageWithCGImage:scale:orientation: on the iPhone 4 with the correct scale factor. The scale should be 2.0 on Retina displays (iPhone 4 or the new iPod Touch) and 1.0 on the 3G, 3GS, and iPad. Calling [[UIScreen mainScreen] scale] will provide the correct scale factor for the device. The first image has jaggies in it, while the second does not. The third image, from an iPhone 3G/3GS, also does not have jaggies.

[Figure: iPhone 4 with default scale of 1.0 causes the image to be enlarged and with jaggies.]

[Figure: iPhone 4 with scaling of 2.0 makes the image half the size and removes the jaggies]

[Figure: iPhone 3G/3GS with scaling set to 1.0]

I hope it helps other people with image processing on the iPhone/iPad. It's based on some previous tutorials using OpenGL, which I fixed (memory leaks) and modified to work with unsigned char arrays (bitmap).


Grab the two files here or the sample Universal iOS App project:

Example Usage:

NSString *path = (NSString*)[[NSBundle mainBundle] pathForResource:@"Icon4" ofType:@"png"];

UIImage *image = [UIImage imageWithContentsOfFile:path];

int width = image.size.width;

int height = image.size.height;

// Create a bitmap

unsigned char *bitmap = [ImageHelper convertUIImageToBitmapRGBA8:image];

// Create a UIImage using the bitmap

UIImage *imageCopy = [ImageHelper convertBitmapRGBA8ToUIImage:bitmap withWidth:width withHeight:height];

// Display the image copy on the GUI

UIImageView *imageView = [[UIImageView alloc] initWithImage:imageCopy];

// Cleanup

free(bitmap);
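Once the bitmap is in hand, the C++ side only needs to know how the bytes are laid out. The sketch below assumes 4 bytes per pixel in R, G, B, A order, rows stored top to bottom, and no padding at the end of a row; check that against ImageHelper before relying on it. Rgba8, pixelOffset, readPixel, and invertColors are hypothetical names for illustration and are not part of ImageHelper.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Assumed layout: 4 bytes per pixel (R, G, B, A), row-major, no row padding.
struct Rgba8 {
    std::uint8_t r, g, b, a;
};

// Byte offset of pixel (x, y) inside a tightly packed RGBA8 buffer.
inline std::size_t pixelOffset(int x, int y, int width) {
    return (static_cast<std::size_t>(y) * width + x) * 4;
}

// Reads one pixel; the coordinates must be inside the image.
Rgba8 readPixel(const unsigned char *bitmap, int width, int height,
                int x, int y) {
    assert(x >= 0 && x < width && y >= 0 && y < height);
    const unsigned char *p = bitmap + pixelOffset(x, y, width);
    return Rgba8{p[0], p[1], p[2], p[3]};
}

// Example of in-place processing: invert the color channels, keep alpha.
void invertColors(unsigned char *bitmap, int width, int height) {
    const std::size_t count = static_cast<std::size_t>(width) * height;
    for (std::size_t i = 0; i < count; ++i) {
        unsigned char *p = bitmap + i * 4;
        p[0] = static_cast<unsigned char>(255 - p[0]);
        p[1] = static_cast<unsigned char>(255 - p[1]);
        p[2] = static_cast<unsigned char>(255 - p[2]);
        // p[3] (alpha) is left untouched so transparency is preserved.
    }
}
```

Because the buffer is a plain unsigned char array, a routine like invertColors can run between the two ImageHelper calls without touching any UIKit types.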

Below is the full source code for converting between bitmap and UIImage:

ImageHelper.h

ImageHelper.m


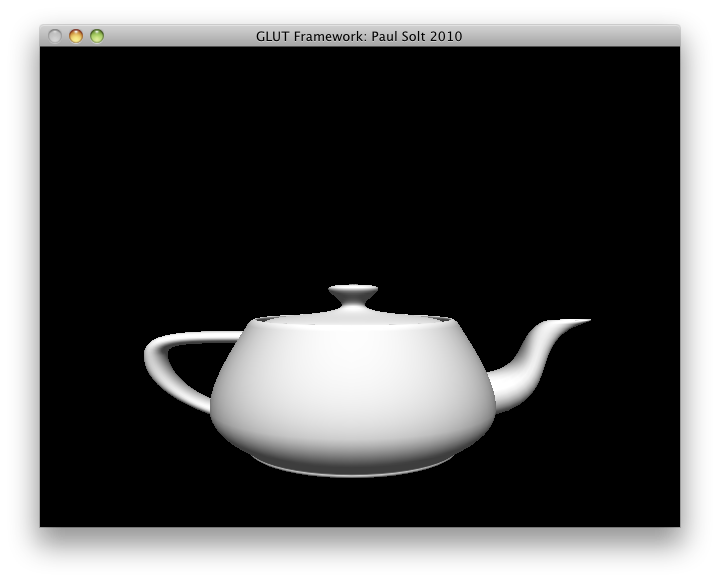

GLUT Object Oriented Framework on Github

In 2009 I took a Computer Animation course at RIT, where I created an object-oriented C++ wrapper for GLUT. The idea was to create a set of classes that could be reused for each of the separate project submissions. The main class wraps around the GLUT C-style functions and provides a class that can be inherited from to provide application-specific functionality. The goal was to make the boilerplate code disappear and make it easier for novice programmers to get an animated graphics window in as few lines as possible. Only four lines of code are needed to get the window running at 60 frames per second. You can subclass the framework and implement your own OpenGL animation or game project.

Edit (8/22/10): You don't need to use pointers, I've updated the code example with working code.

// main.cpp

#include "GlutFramework.h"

using namespace glutFramework;

int main(int argc, char *argv[]) {

GlutFramework framework;

framework.startFramework(argc, argv);

return 0;

}
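The wrapping idea described above, a class that forwards C-style callbacks to virtual methods, can be sketched without GLUT itself. This is not the framework's actual source. registerDisplayFunc and simulateFrame are hypothetical stand-ins for GLUT's glutDisplayFunc and its main loop, and Framework and TeapotApp are illustrative names. The usual trick is a static "trampoline" function that a C API can call, which then dispatches to the current instance.

```cpp
#include <cassert>

// Stand-in for a C callback API (hypothetical; real GLUT registers the
// display callback with glutDisplayFunc and calls it from its main loop).
typedef void (*DisplayCallback)(void);
static DisplayCallback g_displayCallback = nullptr;
void registerDisplayFunc(DisplayCallback cb) { g_displayCallback = cb; }
void simulateFrame() {
    if (g_displayCallback) g_displayCallback();
}

// Base class: owns the C callback and forwards it to a virtual method.
class Framework {
public:
    virtual ~Framework() {
        if (instance_ == this) instance_ = nullptr;
    }
    void start() {
        instance_ = this;  // C callbacks carry no 'this', so remember it here
        registerDisplayFunc(&Framework::displayTrampoline);
    }
    virtual void display() {}  // subclasses override this to draw
private:
    static void displayTrampoline() {
        if (instance_) instance_->display();
    }
    static Framework *instance_;
};
Framework *Framework::instance_ = nullptr;

// Application-specific subclass: only the drawing logic lives here.
class TeapotApp : public Framework {
public:
    int framesDrawn = 0;
    void display() override { ++framesDrawn; }
};
```

The cost of this design is that only one framework instance can be active at a time, which is usually acceptable for a single-window GLUT program.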


The code uses a cross-platform (Windows/Mac tested) timer to create a constant frame-rate, which is necessary for animation projects. It's under the MIT License, so feel free to use it as you see fit. http://github.com/PaulSolt/GLUT-Object-Oriented-Framework
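A constant frame rate usually comes down to measuring how long a frame took and sleeping for the rest of the frame budget. The sketch below uses std::chrono from standard C++ rather than the timer in the repository, so treat it as one possible approach and not the project's implementation. remainingFrameTime and runFrame are hypothetical names.

```cpp
#include <cassert>
#include <chrono>
#include <thread>

using Clock = std::chrono::steady_clock;

// Time left in the current frame's budget, or zero if the frame ran long.
std::chrono::microseconds remainingFrameTime(std::chrono::microseconds elapsed,
                                             int framesPerSecond) {
    assert(framesPerSecond > 0);
    const std::chrono::microseconds budget(1000000 / framesPerSecond);
    return elapsed < budget ? budget - elapsed
                            : std::chrono::microseconds(0);
}

// Runs one frame of work, then sleeps for whatever budget is left over.
template <typename DrawFn>
void runFrame(DrawFn draw, int framesPerSecond) {
    const Clock::time_point start = Clock::now();
    draw();
    const auto elapsed = std::chrono::duration_cast<std::chrono::microseconds>(
        Clock::now() - start);
    std::this_thread::sleep_for(remainingFrameTime(elapsed, framesPerSecond));
}
```

At 60 frames per second the budget is 16666 microseconds with integer division, so a frame that takes 6 ms to draw sleeps for roughly 10.7 ms. Sleep granularity varies by platform, so the resulting rate is approximate.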

Xcode 3.1 and Visual Studio 2010 projects are hosted on github to support Mac and Windows. There is no setup on the Mac, but Windows users will need to configure GLUT. I plan on posting tutorials on how to get set up on both platforms. For now, look at the resources section below.

[Figure: Animated teapot]

Resources:

- Previous Post: http://paulsolt.com/2009/07/openglglut-classes-oop-and-problems/

- GLUT for Windows: http://www.xmission.com/~nate/glut.html

- Visual Studio and GLUT setup: http://www.cs.uiowa.edu/~cwyman/classes/common/howto/winGLUT.html

- Old Post on GLUT in Eclipse: http://www.paulsolt.com/GLUT/


C++ Logging and building Boost for iPhone/iPad 3.2 and MacOSX

In my effort to write more robust and maintainable code I have been searching for a cross-platform C++ logging utility. I'm working on a C++ static library for iPhone/iPad 3.2/Mac/Windows and I needed a way to log what was happening in my library. Along the way I was forced to build Boost for iPhone, iPhone Simulator, and the Mac.

Why logging?

Mobile devices lack a console when detached from a development machine, so it's hard to track down issues. I needed a system that could log at multiple levels (Debug1, Debug2, Info, Error, Warning) and be thread safe. Multiple logger levels allow a developer to turn the level of stored detail up or down, which in turn affects performance through I/O writes. A developer with logging information can better track down crashes and other issues during an application's lifetime.
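To show what those two requirements (levels and thread safety) mean in practice, here is a minimal C++ sketch. It is not the Boost Logging API. The Logger class, the LogLevel enum, and the ordering of the levels from most verbose to most severe are assumptions made for illustration, and messages go to an in-memory buffer instead of a file.

```cpp
#include <cassert>
#include <mutex>
#include <sstream>
#include <string>

// Assumed ordering, most verbose first. Debug2 is treated as more detailed
// than Debug1; that choice is an assumption, not taken from any library.
enum class LogLevel { Debug2 = 0, Debug1, Info, Warning, Error };

class Logger {
public:
    explicit Logger(LogLevel threshold) : threshold_(threshold) {}

    // Records the message if its level is at or above the threshold.
    // Returns true if it was recorded. Safe to call from several threads.
    bool log(LogLevel level, const std::string &message) {
        std::lock_guard<std::mutex> lock(mutex_);
        if (level < threshold_) return false;  // filtered out: no I/O cost
        buffer_ << label(level) << ": " << message << '\n';
        return true;
    }

    // Snapshot of everything recorded so far.
    std::string contents() const {
        std::lock_guard<std::mutex> lock(mutex_);
        return buffer_.str();
    }

private:
    static const char *label(LogLevel level) {
        switch (level) {
            case LogLevel::Debug2:  return "Debug2";
            case LogLevel::Debug1:  return "Debug1";
            case LogLevel::Info:    return "Info";
            case LogLevel::Warning: return "Warning";
            case LogLevel::Error:   return "Error";
        }
        return "Unknown";
    }

    mutable std::mutex mutex_;  // guards threshold_ and buffer_
    LogLevel threshold_;
    std::ostringstream buffer_;
};
```

Raising the threshold to Info drops both debug levels before any formatting happens, which is where the performance saving comes from.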

Why Boost Logger Library v2?

I struggled trying to get a logger working. After many failed attempts with Pantheios, log4cxx, log4cpp, and glog, I settled on the Boost Logger Library v2 because I was able to "compile" for iPhone/iPad 3.2 and Mac OSX. Most of the loggers required other dependencies that would need to be rebuilt for iPhone and didn't directly support iPhone.

The Boost Logger is all header files, so it doesn't require "compiling," which made it much easier to get working. However, it does depend on a few Boost libraries that need to be compiled. Depending on what functionality is used, Boost Logging needs the following libraries: filesystem, system, and thread.

Step 1: Building Boost for iPhone/iPad and iPhone Simulator 3.2

A few Boost libraries need compiling for the iPhone/iPad and the iPhone Simulator in order to link against the Boost Logger. Matt Galloway provided a demo on how to compile Boost 1.41/1.42 for iPhone/iPhone Simulator. Here are the steps I used for Boost 1.42 based on his tutorial.

- Get Boost 1.42

- Extract Boost:

- Create a user-config.jam file in your user directory (~/user-config.jam) such as /Users/paulsolt/user-config.jam with the following. (Note: this config file needs to be renamed or moved during the Mac OS X bjam build.)

- Make sure the file boost_1_42_0/tools/build/v2/tools/darwin.jam has the following information:

- Change directories to the Boost directory that you downloaded:

- Run the following commands to compile the iPhone and iPhone Simulator Boost libraries. I only need filesystem, system, and thread to use Boost logging on the iPhone, so I don't build everything. Run ./bootstrap.sh --help or ./bjam --help for more options. I built the binaries to a location in my development folder to include in my project dependencies.

- Update: Create a universal Boost library using the lipo tool. In this example I'm assuming the binaries that were created have the following names. The names from the bjam generation will be different, based on your own configuration. End Update

- I'm working on a cross-platform project and my directory structure looks like the following. I copied the include and lib files for iPhone and iPhone Simulator into the appropriate directories. The dependency structure allows me to check out the project on another machine and have relative references to Boost and other dependencies.

- Download the Boost Logging Library v2 and unzip it.

- Copy and paste the logging folder into each include/boost folder in the iPhone and iPhone Simulator dependency folders, as in my directory structure. After you unzip the download, the header files are located in the folder logging/boost/logging.

tar xzf boost_1_42_0.tar.gz

~/user-config.jam

using darwin : 4.2.1~iphone

: /Developer/Platforms/iPhoneOS.platform/Developer/usr/bin/gcc-4.2 -arch armv7 -mthumb -fvisibility=hidden -fvisibility-inlines-hidden

:

: arm iphone iphone-3.2

;

using darwin : 4.2.1~iphonesim

: /Developer/Platforms/iPhoneSimulator.platform/Developer/usr/bin/gcc-4.2 -arch i386 -fvisibility=hidden -fvisibility-inlines-hidden

:

: x86 iphone iphonesim-3.2

;

tools/build/v2/tools/darwin.jam

## The MacOSX versions we can target.

.macosx-versions =

10.6 10.5 10.4 10.3 10.2 10.1

iphone-3.2 iphonesim-3.2

iphone-3.1.3 iphonesim-3.1.3

iphone-3.1.2 iphonesim-3.1.2

iphone-3.1 iphonesim-3.1

iphone-3.0 iphonesim-3.0

iphone-2.2.1 iphonesim-2.2.1

iphone-2.2 iphonesim-2.2

iphone-2.1 iphonesim-2.1

iphone-2.0 iphonesim-2.0

iphone-1.x

;

cd /path/to/boost_1_42_0

./bootstrap.sh --with-libraries=filesystem,system,thread

./bjam --prefix=${HOME}/dev/boost/iphone toolset=darwin architecture=arm target-os=iphone macosx-version=iphone-3.2 define=_LITTLE_ENDIAN link=static install

./bjam --prefix=${HOME}/dev/boost/iphoneSimulator toolset=darwin architecture=x86 target-os=iphone macosx-version=iphonesim-3.2 link=static install

lipo -create libboost_filesystem_iphone.a libboost_filesystem_iphonesimulator.a -output libboost_filesystem_iphone_universal.a

lipo -create libboost_system_iphone.a libboost_system_iphonesimulator.a -output libboost_system_iphone_universal.a

lipo -create libboost_thread_iphone.a libboost_thread_iphonesimulator.a -output libboost_thread_iphone_universal.a

|-ArtworkEvolution

|---Xcode

|-----BoostLoggingTest

|---dependencies

|-----iphone

|-------debug

|-------release

|---------include

|-----------boost

|---------lib

|-----iphone-simulator

|-------debug

|-------release

|---------include

|-----------boost

|---------lib

|-----macosx

|-------debug

|-------release

|---------include

|-----------boost

|-----------libs

|-----win32

|---docs

|---source

|---tests

Step 2: Creating the Xcode Project

With the iPhone and iPhone Simulator Boost libraries in hand we're ready to make an Xcode project. Due to the difference in the iPhone and iPhone Simulator libraries we'll need to make two targets. One will build linking against the iPhone Boost libraries (arm) and the other against the iPhone Boost Simulator libraries (x86).

Update: You don't need to create two targets, as we can use the lipo tool to make a universal iPhone/iPhone Simulator library file. The universal library file can be shared between iPhone and iPhone Simulator build configurations. See the instructions for using lipo to create the universal library files in the previous section. However, I will keep the two target instructions up as an alternate approach for Xcode project development, if you choose not to use the lipo tool.

End Update

1. Create a new iPhone project (view based)

2. There will be two targets, "BoostLoggingTest Device" and "BoostLoggingTest Simulator", and each will reference different headers and libraries. Duplicate the starting target and rename each target accordingly.

[caption id="attachment_566" align="aligncenter" width="492" caption="Duplicate target to make iPhone/iPhoneSimulator targets"]

3. Add the libraries that we compiled into two groups: device and simulator under Resources. Right-click on the group "Simulator" or "Device" and select "Add Existing Files". Search for the library .a files that you copied into the iphone and iphone-simulator directories. These resources should be added relative to the project folder.

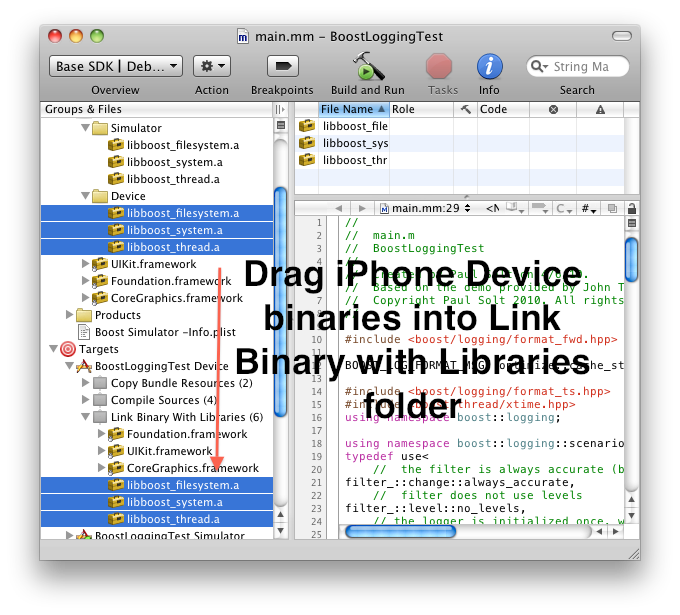

4. Drag the appropriate libraries to each Target. We need two targets since the architecture is different on the iPhone device (arm) versus the iPhone Simulator (Intel x86).

[caption id="attachment_569" align="aligncenter" width="476" caption="Drag the device libraries to the device target."]

[caption id="attachment_570" align="aligncenter" width="476" caption="Drag simulator dependencies to the iPhone simulator target"]

5. Add the "Header Search Path" for each target. For me the relative path will be two directories up from the Xcode project folders: ../../dependencies/iphone/release/include and ../../dependencies/iphone-simulator/release/include. Right-click on each Target in the left pane and click on "Get Info" -> Build -> Type "Header" in the search field -> Edit the list of paths.

[caption id="attachment_571" align="aligncenter" width="512" caption="Add the Device Target Header Search path for the boost libraries"]

[caption id="attachment_572" align="aligncenter" width="518" caption="Add the simulator targets Header Search Paths"]

6. Change the base SDK of each target. For the Device you need to use iPhone Device 3.2 and the Simulator Target needs iPhone Simulator 3.2 or later.

[caption id="attachment_573" align="aligncenter" width="431" caption="Set the Device Target to iPhone Device 3.2"]

[caption id="attachment_574" align="aligncenter" width="431" caption="Set the Simulator Target to iPhone Simulator 3.2"]

7. Now you have two different targets. One is for the iPhone Device and the other is for the iPhone Simulator. We did this because we built separate binaries for Boost on the iPhone (arm) and simulator (x86) platforms.

8. Set the project's Active SDK to use the Base SDK (top left of Xcode). Now it will automatically choose the iPhone Device or iPhone Simulator based on the Base SDK of each Target you select.

9. Logging on the iPhone requires that we use the full path to the file within the application sandbox. Use the following Objective-C code to get it:

NSString *docsDirectory = [NSSearchPathForDirectoriesInDomains(NSDocumentDirectory, NSUserDomainMask, YES) objectAtIndex:0];

NSString *path = [docsDirectory stringByAppendingPathComponent:@"err.txt"];

const char *outputFilename = [path UTF8String];

10. I modified one of the Boost Logging samples to use the full file path on the iPhone. Rename the main.m as main.mm to use Objective-C/C++ and copy paste the following: main.mm code

11. If everything compiled and ran on the Device, you can get the application data from the Xcode Organizer (Option+Command+O). Navigate to Devices and then look in Applications for the test application. Just drag the "Application Data" to your desktop to download it from the device. Your logs should appear in the Documents folder.

Step 3: Build Boost for Mac OS X 10.6 - 4 way fat (32/64 PPC and 32/64 Intel)

1. Build Boost for Mac OS X. Note: If you set up the user-config.jam file for the iPhone Boost build, rename it or move it out of your home directory first; otherwise, skip the mv command.

mv ~/user-config.jam ~/user-config.jam.INACTIVE

cd /path/to/boost_1_42_0

./bootstrap.sh --with-libraries=filesystem,system,thread

./bjam --prefix=${HOME}/dev/boost/macosx toolset=darwin architecture=combined address-model=32_64 link=static install

2. Copy the output into your dependency structure and add the Boost Logging Library headers into the include/boost folder. (Same procedure as with iPhone)

3. Set up an Xcode project or target with the appropriate header search path and Boost Mac OS X libraries, the same way we set up the iPhone Xcode project.

Note: If you get warnings about hidden symbols and default settings, open the Xcode project and make sure that "Inline Methods Hidden" and "Symbols Hidden by Default" are unchecked. Toggling them on and off might fix any Xcode warnings.

References:

- http://iphone.galloway.me.uk/2009/11/compiling-boost-for-the-iphone/

- http://brockwoolf.com/blog/compile-and-use-boost-libraries-in-xcode-visual-studio

Artwork Evolution

I presented at BarCamp #5 Rochester http://barcamproc.org/ at RIT on my ArtworkEvolution project. Here are my presentation slides. ArtworkEvolution-Barcamp

I evolved images during the presentation and these are some of the results:

[caption id="attachment_550" align="aligncenter" width="448" caption="Curved Sky"]

[caption id="attachment_551" align="aligncenter" width="448" caption="TV"]

[caption id="attachment_553" align="aligncenter" width="448" caption="Pillar"]

iPad Revolution

A lot of people have been talking about the iPad. Here are my opinions on the future of iPad, computing, and entertainment.

The iPad is set to revolutionize how we interact with multimedia content and computers. There are a number of reasons that make the hardware and software stand out. First and foremost, it is affordable cutting-edge technology. The $499 price point means that it is not out of reach for average consumers who are interested in an updated “all-in-one” computing device. All 9.7 inches of the screen are multi-touch, which will allow software developers to create very interactive applications. The computer interfaces of Star Trek, Avatar, and other science fiction movies can finally be realized on a large multi-touch screen. The device is connected, which allows the consumer to use it anywhere. Lastly, the device will provide the ultimate responsive user experience.

Home Entertainment Revolution

Apple now has the ability to revolutionize the home entertainment market. They are providing a multimedia portal, which will change the way we use our TVs, computers, and music players. Imagine controlling a TV from the couch without attaching any wires. A user might want to watch “Batman Begins” on their 42” HDTV. A few touches will open iTunes and start the movie. I mentioned the TV, so how does that fit into the picture? The movie streams wirelessly in HD from the couch to the 42” TV. Gone are all the cables, remotes, and hassles. Don’t bother with a power cable, since the device will play content for 10 hours straight. The entertainment cabinet can be cleaned out. Throw out the VCR, CD player, DVD player, Blu-ray player, cable TV, satellite TV, and digital antennas because they are not needed. Apple will be the one-stop remote control for all media content, and it will be seamless to use and control.

Affordable Technology

A few years back, in 2007, Amazon set out to take over the digital books arena. They did a pretty good job at providing access to books, but that is about all they did. The Kindle DX costs $489 and is just a digital book reader. It has limited processing power and storage space. The main attraction is the e-ink technology that is supposed to be easier to read. Overall, the device is nice, but it has a very limited target audience and lacks the multimedia capabilities of an iPhone. Apple worked hard to set the price point of the iPad as close as possible to the Kindle, because they are directly competing with Kindle’s e-book market. For $10 more one can get a full-color display that can play videos, music, and games, display e-books, and run applications. Apple did a wonderful job in selecting a set of features that could be combined for a relatively low price point. The device is slightly more expensive than other eBook readers and netbooks, but not overly expensive.

Large Multi-Touch Screen

For the longest time computers were something that required skill to use. However, this steep learning curve is almost gone with the iPhone, iPod Touch, and iPad. The iPhone revolution brought capacitive multi-touch screens to the public. In plain English, this means that a user just touches, not “presses,” the screen to perform actions. The iPad is riding in the wake of that revolution and taking it a step further by increasing the size of the screen. This technology is not foreign; it is mainstream and it is here to stay because it works. If a user knows how to use an iPhone or a laptop trackpad then the transition is smooth. The touch screen is key, because it allows people to interact with a device just like they might interact with a microwave or a washing machine. A user physically touches, taps, and slides controls around in ways that directly mirror the physical world. The iPad is a natural user interface and it is what most people want, but do not know how to ask for.

Software is Key

The main attraction with any working piece of hardware is software. People want to use a piece of hardware that is customizable. At any given point the device can assume different roles, because it was built to be extensible. In one instant it is an email program, movie player, music player, and then an entire college library. Apple has created a platform that provides many inputs and outputs that software developers can hook into to provide new and novel user experiences. The software development kit (SDK) has given developers direct access to technology that was locked down or too expensive to use. Developers can use a digital compass, accelerometer, multi-touch screen, microphone, and motion sensors to interact with a user in astonishing ways.

Connected

The iPhone provided the all-in-one experience because it can double as a music player, movie player, email program, Internet browser, and eBook reader. It was small, but it had the ability to execute each of those tasks. It has those abilities because of the Wi-Fi and 3G data connections. These connections make it possible to see content beyond the walls of a single hard drive, which provides a much richer experience to the user. The iPad takes these same tasks and makes them better by providing a bigger experience. Users can use these connections in a larger form factor and can be more productive. For most users a simple Wi-Fi connection will be all they need from the couch in the living room. Some users might be active and on the go, so they will need a 3G wireless connection. Apple has recognized these connection issues and separated the two technologies to meet different consumers' needs. Users can get Wi-Fi by itself, or combine Wi-Fi and 3G if they need to always have a connection to the Internet.

User Experience

Users want fast responsive devices, not sluggish devices. A lot of users complain that they cannot run multiple applications (multi-tasking) on the iPhone, but what they do not realize is what they have to give up for multiple applications. Running anything in parallel on a mobile device means that it is dividing computing resources and power among applications that are invisible in the background. These resource hogs will slow a device down and drain a battery.

Traditional multi-tasking is not what users want. Apple supports multi-tasking, but only for first-party applications. In restricting access, Apple has complete control of the user experience. Third-party multi-tasking is not supported for a few reasons.

- The Windows Task Manager is a power user feature that is unnecessarily complicated. On a Windows Mobile 6.x device, the task manager is a terrible experience. For example, pressing the ‘X’ on an application is not guaranteed to close the application. The button may only minimize the application, in which case it is still using computing resources and draining the battery. The ability to manage open applications is a power user feature on a mobile device and should be hidden from a typical user.

- What is the difference between running an application in the background and running applications one at a time if the transition from one application to the next is fast and seamless? Does the experience have to differ solely because of a technicality? iPhone applications can save the state of the last thing they were doing when they are closed. For example, suppose a user is composing an email about a trip on an iPad. They need weather information and decide to check the weather with the following steps.

- Press the home button.

- Touch the weather application.

- Press the home button.

- Touch the email application.

- Resume composing the email with the updated weather knowledge.

- Running applications in the background allows companies to directly compete with Apple's multimedia business. iTunes is one of the few applications, like Mail and Messages, that run in the background. A user can play music through iTunes while using different applications. If a user could use Pandora for music in the background, then they would have less reason to stay on the iTunes platform. I do not see Apple changing this policy, since it is not in their best interest.

Conclusion

The iPad will simplify the experience of downloading new movies, games, music, books, and utility applications. There is no doubt in my mind that Apple will continue to innovate on this new iPad platform to further simplify and connect the multimedia experience in every person's home. The iPad is magic and just works.

Texture Evolution

For my Genetic Algorithms class I decided to build on the work of Karl Sims. I have built a system that can evolve images based on "user natural selection" over a period of time. The user plays god and selects which images will live and die to produce new images. Here's a fun sample of images:

[caption id="attachment_398" align="aligncenter" width="448" caption="Curtains"]

[caption id="attachment_396" align="aligncenter" width="448" caption="Waves"]

[caption id="attachment_394" align="aligncenter" width="448" caption="Loops"]

[caption id="attachment_393" align="aligncenter" width="448" caption="Logo"]

[caption id="attachment_392" align="aligncenter" width="448" caption="Squirley"]

[caption id="attachment_391" align="aligncenter" width="448" caption="Hungry Curves"]

[caption id="attachment_389" align="aligncenter" width="448" caption="A Future Road"]

[caption id="attachment_388" align="aligncenter" width="448" caption="Wallpaper"]

[caption id="attachment_387" align="aligncenter" width="448" caption="Pipes"]

[caption id="attachment_386" align="aligncenter" width="448" caption="Peace"]

[caption id="attachment_385" align="aligncenter" width="448" caption="Planet Rings"]

iPhone Development with OpenGL

Here's my second presentation on iPhone Development at RIT for the Computer Science Community (CSC). If you enjoyed it, let me know. I decided to look into graphics and OpenGL for the presentation.

Slides: iPhone Development II - Paul Solt

Demo: Triangle Demo: OpenGL ES on iPhone

[caption id="attachment_372" align="aligncenter" width="290" caption="OpenGL ES Triangle Demo"]

The first demo is a basic OpenGL ES iPhone project using vertex/color arrays and an orthogonal view. It's based on the tutorials from Jeff LaMarche. This is the starting point for any iPhone OpenGL ES project. It'll give you a window that you can draw in and manipulate OpenGL state. Use Jeff's Xcode project template to make getting started painless. It's easier than using GLUT!

Demo: Cocos2D for iPhone: Cocos2D iPhone Graphics Demo

[caption id="attachment_368" align="aligncenter" width="554" caption="Cocos2D Demo for iPhone"]

Cocos2D is a 2D graphics package to aid graphical applications on the iPhone. It provides some really neat animation support along with project templates to make setup a breeze. Cocos2D comes with two separate physics packages that you can incorporate into your game. The demo shows an animation triggered when the user presses on the screen. Animations can be built from simple actions and combined to create a complex animation. It's perfect for a platformer.

Demo: Raytracing on the iPhone

[caption id="attachment_382" align="aligncenter" width="290" caption="Raytracing with Photon Mapping"]

I ported my computer graphics II ray tracer from OpenGL/GLUT to OpenGL ES on the iPhone. The performance is very slow on the iPhone 3G (133-230 seconds), but that's to be expected with the given hardware. In the simulator it can render a scene in about 15 seconds. This demo shows how the performance of the actual device is vastly different from the iPhone simulator. I'm sure I can rework portions of the ray tracer to be more efficient, but that wasn't the goal of the quick port.

Resources:

- OpenGL: "The Red Book" OpenGL(R) Programming Guide: The Official Guide to Learning OpenGL(R), Version 2.1 (6th Edition)

- OpenGL ES Tutorials: http://iphonedevelopment.blogspot.com/2009/05/opengl-es-from-ground-up-table-of.html

- Cocos2D: http://code.google.com/p/cocos2d-iphone/

iPhone Development Talk

Today I gave a presentation on iPhone Development at RIT for the Computer Science Community (CSC). If you enjoyed it, let me know. I'm looking into starting an informal iPhone Dev workshop for more topics.

Here are the slides and Xcode projects:

Slides: iPhone Development - Paul Solt

1. Demo: Hello World Pusher: Foo2

2. Demo: Touch Input: Stalker

3. Demo: Robot Remote Control: See my previous post

*The Touch Input demo was based on a demo given during the Stanford iPhone courses available on iTunes here.

Resources:

- Cocoa Programming for Mac OS X by Aaron Hillegass (Third Edition)

- Stanford iPhone Course (cs193p.stanford.edu)

- Search “iPhone Application Programming” in iTunes

- Beginning iPhone 3 Development: Exploring the iPhone SDK by Jeff LaMarche

- iPhone Dev Center

iPhone Player/Stage Remote Control

Here's the iPhone Player/Stage Remote Control project! There's a .pdf in the .zip file that describes how to set up Xcode.

[caption id="attachment_456" align="aligncenter" width="539" caption="Controlling a Robot over Wi-Fi"]

[caption id="attachment_455" align="aligncenter" width="496" caption="A Virtual Robot in a Virtual World"]

The goal of this project was to use the Player/Stage robotics code on the iPhone to communicate with and control robots. I discuss how to set up the Xcode development environment. There are two example Xcode projects. The first one is an Objective-C project that wraps around the C++ Player/Stage code. The second project is a very primitive C++ program running on the iPhone without any UI. Both of these Xcode projects are fully documented and will serve as a starting point for iPhone Player/Stage development.

iPhone Player/Stage Remote Control Project: iPhonePlayerStage

Feel free to ask questions and let me know how you use the code.

iPhone Remote Control Samples

Get ready for an iPhone robot remote control. I'm working on creating a couple samples and documentation. Check out the video from Imagine RIT: http://www.youtube.com/watch?v=MOxTV41Lqac

Here's the link to my follow-up post: iPhone Player/Stage Sample Projects

OpenGL/GLUT, Classes (OOP), and Problems

Update: 8/22/10 Check out the updated framework and post: http://paulsolt.com/2010/08/glut-object-oriented-framework-on-github/

I created a C style driver program that used OpenGL/GLUT for my computer animation course projects. It worked fine for the first project. However, there were multiple projects and they all started to use the same boilerplate code. In order to reuse code, I decided to refactor and make an extensible class to set up GLUT. My goal was to make it easy to extend the core behavior of the GLUT/OpenGL application.

As I refactored the code I decided to make a base class to perform all the GLUT setup. There's a first time for everything and I didn't realize the scope of this change until I was committed to it. I will summarize the problems and how to solve them. The code and explanations should provide the basic understanding of what is happening, but it will not compile as it is provided.

Problem 1: My initial attempt was to pass member functions to the GLUT callback functions. However, you can't pass member functions from a class directly to C callback functions. The GLUT callback functions expect a function signature exactly like function(BLAH), and I was giving them something that was effectively function(this, BLAH), where "this" is the object passed under the hood.

class AnimationFramework {

public:

void display();

void run();

void keyboard(unsigned char key, int x, int y);

void keyboardUp(unsigned char key, int x, int y);

void specialKeyboard(int key, int x, int y);

void specialKeyboardUp(int key, int x, int y);

void startFramework(int argc, char *argv[]);

};

... in the setup function for GLUT

void AnimationFramework::startFramework(int argc, char *argv[]) {

// Initialize GLUT

glutInit(&argc, argv);

glutInitDisplayMode(GLUT_RGB | GLUT_DOUBLE | GLUT_DEPTH);

glutInitWindowPosition(300, 100);

glutInitWindowSize(600, 480);

glutCreateWindow("Animation Framework");

// Function callbacks

glutDisplayFunc(display); // ERROR

glutKeyboardFunc(keyboard); // ERROR

glutKeyboardUpFunc(keyboardUp); // ERROR

glutSpecialFunc(specialKeyboard); // ERROR

glutSpecialUpFunc(specialKeyboardUp); // ERROR

init(); // Initialize

glutIdleFunc(run); // The program run loop // ERROR

glutMainLoop(); // Start the main GLUT thread

}
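The type mismatch behind those errors can be checked without GLUT at all. The following sketch uses only the standard `<type_traits>` header. `CCallback`, `Framework`, and `registerCallback` are hypothetical stand-ins for a GLUT-style callback type, the framework class, and a registration function such as `glutDisplayFunc`.

```cpp
#include <type_traits>

// The shape of callback a C API accepts: a plain function pointer.
using CCallback = void (*)();

struct Framework {
    void display() {}                 // instance method: needs a hidden "this"
    static void displayWrapper() {}   // static method: no "this" involved
};

// A pointer to an instance method has its own type that carries the class.
static_assert(std::is_same<decltype(&Framework::display),
                           void (Framework::*)()>::value,
              "instance methods yield pointer-to-member types");

// That type does not convert to the plain function pointer a C API expects.
static_assert(!std::is_convertible<decltype(&Framework::display),
                                   CCallback>::value,
              "a member function pointer is not a C callback");

// A static member function is an ordinary function as far as its type goes.
static_assert(std::is_same<decltype(&Framework::displayWrapper),
                           CCallback>::value,
              "static methods match the C callback type");

// Hypothetical stand-in for a C registration function.
inline bool registerCallback(CCallback callback) {
    return callback != nullptr;
}
```

Calling `registerCallback(&Framework::displayWrapper)` compiles, while `registerCallback(&Framework::display)` is rejected by the compiler for the reason the second assertion spells out.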

Solution 1: Create static methods, and pass these static methods to the callback functions. All the logic for the AnimationFramework will go into these static methods. I've fixed the compiler errors, but it feels like a step backwards from what I set out to do.

class AnimationFramework {

public:

static void displayWrapper();

static void runWrapper();

static void keyboardWrapper(unsigned char key, int x, int y);

static void keyboardUpWrapper(unsigned char key, int x, int y);

static void specialKeyboardWrapper(int key, int x, int y);

static void specialKeyboardUpWrapper(int key, int x, int y);

void startFramework(int argc, char *argv[]);

};

... in a setup function for GLUT

void AnimationFramework::startFramework(int argc, char *argv[]) {

// Initialize GLUT

glutInit(&argc, argv);

glutInitDisplayMode(GLUT_RGB | GLUT_DOUBLE | GLUT_DEPTH);

glutInitWindowPosition(300, 100);

glutInitWindowSize(600, 480);

glutCreateWindow("Animation Framework");

// Function callbacks

glutDisplayFunc(displayWrapper); // NO ERRORS!

glutKeyboardFunc(keyboardWrapper);

glutKeyboardUpFunc(keyboardUpWrapper);

glutSpecialFunc(specialKeyboardWrapper);

glutSpecialUpFunc(specialKeyboardUpWrapper);

init(); // Initialize

glutIdleFunc(runWrapper); // The program run loop

glutMainLoop(); // Start the main GLUT thread

}

I've made a bit of progress, but when you think about it, static methods aren't much different from the plain C functions.

Problem 2: I want to have the functionality in the instance methods, yet I'm stuck with static methods. I'm not encapsulating the behavior inside instance methods, and I have no option for inheritance. I've still failed to hit the initial goal of creating an easy to use object oriented GLUT wrapper that's extensible.

Solution 2: I need to make virtual instance methods in the class and call them from the static callback functions. But I can't make the function calls directly; I need a static pointer to an instance of the class to make the instance function calls. (Re-read that, as it's a little complex.) All I need to do is pass an instance of the class or subclass and I'll be able to extend the functionality. It's a little tricky/ugly, but it's the best method I've found for encapsulating C style GLUT in a C++ application.

class AnimationFramework {

protected:

static AnimationFramework *instance;

public:

static void displayWrapper();

static void runWrapper();

static void keyboardWrapper(unsigned char key, int x, int y);

static void keyboardUpWrapper(unsigned char key, int x, int y);

static void specialKeyboardWrapper(int key, int x, int y);

static void specialKeyboardUpWrapper(int key, int x, int y);

void startFramework(int argc, char *argv[]);

void run();

virtual void display(float dTime);

virtual void keyboard(unsigned char key, int x, int y);

virtual void keyboardUp(unsigned char key, int x, int y);

virtual void specialKeyboard(int key, int x, int y);

virtual void specialKeyboardUp(int key, int x, int y);

};

The static methods are implemented here:

// Static functions which are passed to Glut function callbacks

void AnimationFramework::displayWrapper() {

instance->displayFramework(); // calls display(float) with time delta

}

void AnimationFramework::runWrapper() {

instance->run();

}

void AnimationFramework::keyboardWrapper(unsigned char key, int x, int y) {

instance->keyboard(key,x,y);

}

void AnimationFramework::keyboardUpWrapper(unsigned char key, int x, int y) {

instance->keyboardUp(key,x,y);

}

void AnimationFramework::specialKeyboardWrapper(int key, int x, int y) {

instance->specialKeyboard(key,x,y);

}

void AnimationFramework::specialKeyboardUpWrapper(int key, int x, int y) {

instance->specialKeyboardUp(key,x,y);

}

The startFramework() method is the same as the one provided in Solution 1.

EDIT: I left out details on how the instance was set. In my program, I subclassed AnimationFramework and created classes for each program I needed overriding the appropriate methods. As an example, KeyFrameFramework was a subclass in my project.

I have a function in my AnimationFramework.h

static void setInstance(AnimationFramework * framework);

AnimationFramework.cpp

AnimationFramework *AnimationFramework::instance = 0;

void AnimationFramework::setInstance(AnimationFramework *framework) {

instance = framework;

}

The main.cpp looks something like this:

int main(int argc, char *argv[]) {

AnimationFramework *f = new KeyFrameFramework(); // Subclass of AnimationFramework

AnimationFramework::setInstance(f); // static method, so call it on the class

f->setTitle("Key Framing:");

f->setLookAt(0.0, 2.0, 10.0, 0.0, 2.0, 0.0, 0.0, 1.0, 0.0);

f->startFramework(argc, argv);

return 0;

}

I set the static instance variable of the AnimationFramework class to an object of an AnimationFramework subclass called KeyFrameFramework. Doing so lets me use polymorphism: the static wrappers call the virtual methods, which resolve to the overrides specific to each animation project. Note: Don't set the instance within a constructor, since the object is not fully initialized until the constructor has finished. Set the instance after your subclass object has been created.
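
The constructor warning can be checked with a small sketch. Base, Derived, and the two helper functions are hypothetical names used only for this illustration. While the base class constructor is running, only the base part of the object exists, so a virtual call made through the instance pointer at that moment reaches the base version and not the subclass override.

```cpp
#include <string>

class Base {
public:
    Base() {
        instance = this;
        // Only the Base part exists here, so this virtual call
        // resolves to Base::name(), not to the subclass override.
        nameSeenInConstructor = instance->name();
    }
    virtual ~Base() {}

    virtual std::string name() const { return "Base"; }

    static Base *instance;
    std::string nameSeenInConstructor;
};

Base *Base::instance = nullptr;

class Derived : public Base {
public:
    std::string name() const override { return "Derived"; }
};

// What a callback would have seen if it fired during construction.
std::string nameDuringConstruction() {
    Derived d;
    std::string result = d.nameSeenInConstructor;
    Base::instance = nullptr; // d is about to go out of scope
    return result;
}

// What a callback sees once the object is fully constructed.
std::string nameAfterConstruction() {
    Derived d;
    Base::instance = &d;
    std::string result = Base::instance->name();
    Base::instance = nullptr; // d is about to go out of scope
    return result;
}
```

Here nameDuringConstruction() returns "Base" while nameAfterConstruction() returns "Derived", which is why the instance should be set only after the subclass object has been created.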

Let me know if you have any questions.

iPhone Default User Settings Null?

I wanted to set default values for my application using a Settings.bundle and I ran into an interesting issue with iPhone SDK 2.2. If you don't run the Settings application before your application runs for the first time, then the default settings are not set. It turns out that you need to set them manually the first time the application runs.

Setting defaults to the default value...

I'm not sure why there isn't a function that can set the values for me, but after digging I found someone who ran into the same dilemma. I don't want to have multiple locations with default values (code and a Settings.bundle), so I found a programmatic way to set all the default values if they haven't been set.

- (void)registerDefaultsFromSettingsBundle {

NSString *settingsBundle = [[NSBundle mainBundle] pathForResource:@"Settings" ofType:@"bundle"];

if(!settingsBundle) {

NSLog(@"Could not find Settings.bundle");

return;

}

NSDictionary *settings = [NSDictionary dictionaryWithContentsOfFile:[settingsBundle stringByAppendingPathComponent:@"Root.plist"]];

NSArray *preferences = [settings objectForKey:@"PreferenceSpecifiers"];

NSMutableDictionary *defaultsToRegister = [[NSMutableDictionary alloc] initWithCapacity:[preferences count]];

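for(NSDictionary *prefSpecification in preferences) {

NSString *key = [prefSpecification objectForKey:@"Key"];

id defaultValue = [prefSpecification objectForKey:@"DefaultValue"];

// Skip entries without a key (e.g. group titles) or without a default value; setObject:forKey: throws on nil

if(key && defaultValue) {

[defaultsToRegister setObject:defaultValue forKey:key];

}

}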

[[NSUserDefaults standardUserDefaults] registerDefaults:defaultsToRegister];

[defaultsToRegister release];

}

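All you have to do is call the above method when one of your standard user defaults returns nil. Registered defaults are not written to disk, so the check repeats on every launch until a value is actually saved. Make the call once your application finishes launching, like so: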

- (void)applicationDidFinishLaunching:(UIApplication *)application {

// Get the application user default values

NSUserDefaults *user = [NSUserDefaults standardUserDefaults];

NSString *server = [user stringForKey:@"server_address"];

if(!server) {

// If the default value doesn't exist, register the defaults manually.

[self registerDefaultsFromSettingsBundle];

server = [user stringForKey:@"server_address"];

}

[window addSubview:viewController.view];

[window makeKeyAndVisible];

}

References:

- http://stackoverflow.com/questions/510216/can-you-make-the-settings-in-settings-bundle-default-even-if-you-dont-open-the-s

- Display code in your Wordpress blog: http://wordpress.org/extend/plugins/wp-syntax/


Using SCM with SVN 1.6 and Xcode 3.1.2

Version control software is important for keeping track of changes. Today I was testing out the Xcode SCM (Software Configuration Management) integrated tools with SVN and I ran into a few issues.

1. If you update SVN to version 1.6+, Xcode keeps using the wrong dynamic libraries for SVN. Since it's referencing the wrong libraries, you will get an error similar to this one:

Error: 155021 (Unsupported working copy format) please get a newer Subversion client

I've seen this type of error before, when I upgraded SVN and tried to use other SCM GUI software. To fix it, I googled around and found some useful information at Blind Genius Weblog:

cd /usr/lib

sudo mkdir oldSVN

sudo mv libap*-1.dylib oldSVN

sudo mv libsvn*-1.dylib oldSVN

sudo mv libap*-1.0.dylib oldSVN

sudo mv libsvn*-1.0.dylib oldSVN

sudo ln -s /opt/local/lib/libap*-1.0.dylib .

sudo ln -s /opt/local/lib/libsvn*-1.0.dylib .

sudo ln -s /opt/local/lib/libap*-1.dylib .

sudo ln -s /opt/local/lib/libsvn*-1.dylib .

Instead of removing the library files, I moved them into a new directory as backup.

2. Now Xcode can use the correct updated libraries from SVN 1.6+, so I moved on to the next task of adding an existing project to the SVN repository. The tutorial at jms1.net was helpful in refreshing my memory for the SVN commands.

Make a project in Xcode, then open Terminal and execute the following commands. If you aren't familiar with SVN, check out the documentation.

cd LOCAL_PROJECT_PATH

svn mkdir SVN_REPOSITORY_LOCATION/PROJECT_NAME

svn co SVN_REPOSITORY_LOCATION/PROJECT_NAME .

svn add *

svn revert --recursive build

svn ps svn:ignore build .

svn ci

The commands create a folder in your SVN repository, check out that remote folder into the local project folder, and add all of the project files. Once the files are "added", you'll want to un-add the build directory and tell SVN to ignore it. Lastly, the commit sends the changes and your project is in the repository.

3. The project is in the repository and Xcode is using the latest version of SVN. You can use the SCM tools in Xcode to manage the project.
